
OpenAI officials say that the ChatGPT histories a user reported result from his ChatGPT account being compromised. The unauthorized logins came from Sri Lanka, an OpenAI representative said. The user said he logs into his account from Brooklyn, New York.

“From what we discovered, we consider it an account take over in that it’s consistent with activity we see where someone is contributing to a ‘pool’ of identities that an external community or proxy server uses to distribute free access,” the representative wrote. “The investigation observed that conversations were created recently from Sri Lanka. These conversations are in the same time frame as successful logins from Sri Lanka.”

The user, Chase Whiteside, has since changed his password, but he doubted his account was compromised. He said he used a nine-character password with upper- and lower-case letters and special characters. He said he didn’t use it anywhere other than for a Microsoft account. He said the chat histories belonging to other people appeared all at once on Monday morning during a brief break from using his account.

OpenAI’s explanation likely means the original suspicion that ChatGPT was leaking chat histories to unrelated users is wrong. It does, however, underscore that the site provides no mechanism for users such as Whiteside to protect their accounts using 2FA or to track details such as the IP location of current and recent logins. These protections have been standard on most major platforms for years.

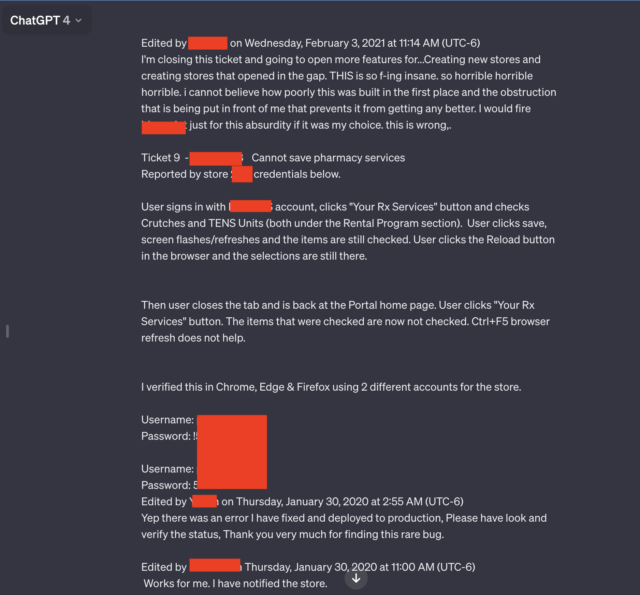

Original story: ChatGPT is leaking private conversations that include login credentials and other personal details of unrelated users, screenshots submitted by an Ars reader on Monday indicated.

Two of the seven screenshots the reader submitted stood out in particular. Both contained multiple pairs of usernames and passwords that appeared to be connected to a support system used by employees of a pharmacy prescription drug portal. An employee using the AI chatbot seemed to be troubleshooting problems they encountered while using the portal.

“Horrible, horrible, horrible”

“THIS is so f-ing insane, horrible, horrible, horrible, i cannot believe how poorly this was built in the first place, and the obstruction that is being put in front of me that prevents it from getting better,” the user wrote. “I would fire [redacted name of software] just for this absurdity if it was my choice. This is wrong.”

Besides the candid language and the credentials, the leaked conversation includes the name of the app the employee is troubleshooting and the store number where the problem occurred.

The entire conversation goes well beyond what’s shown in the redacted screenshot above. A link Ars reader Chase Whiteside included showed the chat conversation in its entirety. The URL disclosed additional credential pairs.

The conversations appeared Monday morning, shortly after Whiteside had used ChatGPT for an unrelated query.

“I went to make a query (in this case, help coming up with clever names for colors in a palette) and when I returned to access moments later, I noticed the additional conversations,” Whiteside wrote in an email. “They weren’t there when I used ChatGPT just last night (I’m a pretty heavy user). No queries were made—they just appeared in my history, and most certainly aren’t from me (and I don’t think they’re from the same user either).”

Other conversations leaked to Whiteside include the name of a presentation someone was working on, details of an unpublished research proposal, and a script using the PHP programming language. The users for each leaked conversation appeared to be different and unrelated to each other. The conversation involving the prescription portal included the year 2020. Dates didn’t appear in the other conversations.

The episode, and others like it, underscore the wisdom of stripping out personal details from queries made to ChatGPT and other AI services whenever possible. Last March, ChatGPT-maker OpenAI took the AI chatbot offline after a bug caused the site to show titles from one active user’s chat history to unrelated users.
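One way to act on the advice about stripping personal details is to scrub obvious identifiers from a prompt before it is sent. The following is a minimal sketch, not a complete solution: the `redact` function and its two patterns are hypothetical, they catch only simple email addresses and phone numbers, and they would miss names, account numbers, and passwords like those in the screenshots.

```python
import re

# Hypothetical, deliberately simple patterns; real PII detection needs far more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(prompt: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    prompt = EMAIL_RE.sub("[EMAIL]", prompt)
    prompt = PHONE_RE.sub("[PHONE]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact("Email me at jane.doe@example.com or call 555-123-4567"))
```

A filter like this reduces accidental exposure but is no substitute for keeping credentials out of chat queries altogether.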

In November, researchers published a paper reporting how they used queries to prompt ChatGPT into divulging email addresses, phone and fax numbers, physical addresses, and other private data that was included in material used to train the ChatGPT large language model.

Concerned about the possibility of proprietary or private data leakage, companies, including Apple, have restricted their employees’ use of ChatGPT and similar sites.

As mentioned in a December article, written when multiple people found that Ubiquiti’s UniFi devices broadcast private video belonging to unrelated users, these sorts of experiences are as old as the Internet. As explained in that article:

The precise root causes of this type of system error vary from incident to incident, but they often involve “middlebox” devices, which sit between the front- and back-end devices. To improve performance, middleboxes cache certain data, including the credentials of users who have recently logged in. When mismatches occur, credentials for one account can be mapped to a different account.
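The mismatch described in that passage can be illustrated with a toy model. This is a sketch under stated assumptions, not a description of any real product: `Backend`, `BuggyMiddlebox`, `FixedMiddlebox`, and the idea of caching a credential per connection slot are all hypothetical names and simplifications chosen for illustration.

```python
from typing import Dict


class Backend:
    """Hypothetical back-end that maps session tokens to accounts."""

    def __init__(self, sessions: Dict[str, str]) -> None:
        self.sessions = sessions

    def history_for(self, token: str) -> str:
        return f"chat history of {self.sessions[token]}"


class BuggyMiddlebox:
    """Caches the first credential seen on a connection slot and never rechecks it."""

    def __init__(self, backend: Backend) -> None:
        self.backend = backend
        self.token_by_conn: Dict[int, str] = {}

    def request(self, conn_id: int, token: str) -> str:
        # Bug: a slot reused by a different client still carries the old credential.
        cached = self.token_by_conn.setdefault(conn_id, token)
        return self.backend.history_for(cached)


class FixedMiddlebox(BuggyMiddlebox):
    """Refreshes the cached credential whenever the presented one differs."""

    def request(self, conn_id: int, token: str) -> str:
        if self.token_by_conn.get(conn_id) != token:
            self.token_by_conn[conn_id] = token
        return self.backend.history_for(self.token_by_conn[conn_id])


if __name__ == "__main__":
    backend = Backend({"tok-alice": "alice", "tok-bob": "bob"})
    buggy, fixed = BuggyMiddlebox(backend), FixedMiddlebox(backend)
    buggy.request(7, "tok-alice")
    fixed.request(7, "tok-alice")
    print("buggy:", buggy.request(7, "tok-bob"))  # bob is served alice's data
    print("fixed:", fixed.request(7, "tok-bob"))
```

In the buggy version, a second client that lands on the same connection slot is served the first client's data, which is the kind of cross-account mapping the quoted passage describes.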

This story was updated on January 30 at 3:21 pm ET to reflect OpenAI’s investigation into the incident.