On Tuesday, Intel revealed a new AI accelerator chip called Gaudi 3 at its Vision 2024 event in Phoenix. Citing strong performance claims for running large language models (like those that power ChatGPT), the company has positioned Gaudi 3 as an alternative to Nvidia’s H100, a popular data center GPU that has been subject to shortages, though those shortages are apparently easing somewhat.

Compared to Nvidia’s H100 chip, Intel projects a 50 percent faster training time on Gaudi 3 for both OpenAI’s GPT-3 175B LLM and the 7-billion parameter version of Meta’s Llama 2. In terms of inference (running the trained model to get outputs), Intel claims that its new AI chip delivers 50 percent faster performance than H100 for Llama 2 and Falcon 180B, which are both relatively popular open-weights models.

Intel is targeting the H100 because of its high market share, but the chip isn’t Nvidia’s most powerful AI accelerator in the pipeline. The since-announced H200 and Blackwell B200 surpass the H100 on paper, but neither of those chips is out yet (the H200 is expected in the second quarter of 2024, basically any day now).

Meanwhile, the aforementioned H100 supply issues have been a major headache for tech companies and AI researchers who have to fight for access to any chips that can train AI models. This has led several tech companies like Microsoft, Meta, and OpenAI (rumor has it) to seek their own AI-accelerator chip designs, although that custom silicon is typically manufactured by either Intel or TSMC. Google has its own line of tensor processing units (TPUs) that it has been using internally since 2015.

Given those issues, Intel’s Gaudi 3 may be an attractive alternative to the H100 if Intel can hit an ideal price (which Intel has not provided, but an H100 reportedly costs around $30,000–$40,000) and maintain adequate production. AMD also manufactures a competitive range of AI chips, such as the AMD Instinct MI300 Series, that sell for around $10,000–$15,000.

Gaudi 3 performance

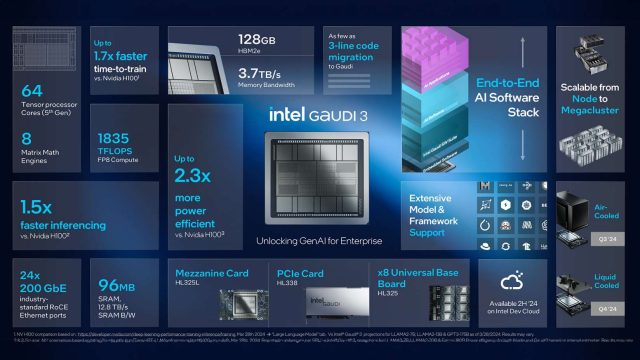

Intel says the new chip builds upon the architecture of its predecessor, Gaudi 2, by featuring two identical silicon dies connected by a high-bandwidth connection. Each die contains a central cache memory of 48 megabytes, surrounded by four matrix multiplication engines and 32 programmable tensor processor cores, bringing the total cores to 64.
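
To make the per-die arithmetic concrete, here is a minimal Python sketch that doubles the figures quoted above. The variable names are illustrative and not Intel's terminology, and the cache and matrix-engine totals are simply derived from the per-die numbers rather than taken from a separate spec sheet.

```python
# Per-die figures as quoted in the article; names are illustrative only.
DIES = 2
CACHE_MB_PER_DIE = 48
MATRIX_ENGINES_PER_DIE = 4
TENSOR_CORES_PER_DIE = 32

# Whole-chip totals are the per-die figures multiplied by the die count.
totals = {
    "cache_mb": DIES * CACHE_MB_PER_DIE,
    "matrix_engines": DIES * MATRIX_ENGINES_PER_DIE,
    "tensor_cores": DIES * TENSOR_CORES_PER_DIE,
}

for name, value in totals.items():
    print(f"{name}: {value}")
```

The tensor-core total matches the 64 cores cited in the paragraph above.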

The chipmaking giant claims that Gaudi 3 delivers double the AI compute performance of Gaudi 2 using the 8-bit floating-point (FP8) format, which has become crucial for training transformer models. The chip also offers a fourfold boost for computations using the BFloat16 number format. Gaudi 3 also features 128GB of the less expensive HBM2e memory (which may contribute to price competitiveness) and 3.7TB/s of memory bandwidth.

Since data centers are well-known to be power hungry, Intel emphasizes the power efficiency of Gaudi 3, claiming 40 percent greater inference power efficiency than Nvidia’s H100 across the 7B- and 70B-parameter Llama models and the 180B-parameter Falcon model. Eitan Medina, chief operating officer of Intel’s Habana Labs, attributes this advantage to Gaudi’s large-matrix math engines, which he claims require significantly less memory bandwidth compared to other architectures.

Gaudi vs. Blackwell

Last month, we covered the splashy launch of Nvidia’s Blackwell architecture, including the B200 GPU, which Nvidia claims will be the world’s most powerful AI chip. It seems natural, then, to compare what we know about Nvidia’s highest-performing AI chip to the best of what Intel can currently produce.

For starters, Gaudi 3 is being manufactured using TSMC’s N5 process technology, according to IEEE Spectrum, narrowing the gap between Intel and Nvidia in terms of semiconductor fabrication technology. The upcoming Nvidia Blackwell chip will use a custom N4P process, which reportedly offers modest performance and efficiency improvements over N5.

Gaudi 3’s use of HBM2e memory (as we mentioned above) is notable compared to the more expensive HBM3 or HBM3e used in competing chips, offering a balance of performance and cost-efficiency. This choice seems to emphasize Intel’s strategy to compete not only on performance but also on price.

As for raw performance comparisons between Gaudi 3 and the B200, those can’t be made until the chips have been released and benchmarked by a third party.

As the race to power the tech industry’s thirst for AI computation heats up, IEEE Spectrum notes that the next generation of Intel’s Gaudi chip, code-named Falcon Shores, remains a point of interest. It also remains to be seen whether Intel will continue to rely on TSMC’s technology or leverage its own foundry business and upcoming nanosheet transistor technology to gain a competitive edge in the AI accelerator market.